Somewhere in Germany there is a secured data server that holds one of the world’s most comprehensive archives of propaganda videos produced by the so-called Islamic State (ISIS), an extremist jihadist militant group that gained international notoriety in the mid-2010s for its sensationalist use of media violence to spread its ideology. The German archive of ISIS propaganda was created mainly by one young researcher whose job was to collect and watch every ISIS video he could find and generate descriptions and metadata for each video to facilitate further analysis.#ISIS, #propaganda, #dispassion

After some weeks involving hundreds of hours watching these videos, the researcher began to experience anxiety, nightmares and thoughts of self-harm. Through therapy he devised different tactics to continue his work. One was to don a white lab coat to inhabit the persona of a scientific researcher in order to distance himself from the material while generating data about it. But when he encountered a particularly troubling image, he would adopt a different approach and imagine himself inside the scene to actively change the outcome, even imagining his closest friends alongside him for additional support.#laboratory, #dispassion

These two modes of interacting with an image – detached analysis and active transformation – resonate with two modes of artificial intelligence technologies in contemporary visual culture. As described by researcher Antonio Somaini, there is analytic AI in which images are situated within datasets for analysis, and generative AI that creates new visual content by transforming existing data. While the two processes are inextricably linked, they distinguish two dimensions of algorithmic engagement with images and hint at how the analysis of images leads to the generation of new ones.#AI, #Antonio Somaini, #dispassion

Image analysis-as-image production: this also describes the practice of video essays, in which audiovisual media is repurposed to analyze and reflect critically upon itself. To avoid copyright infringement claims, video essays have often cited fair use policies that justify the use of existing material if it has been sufficiently transformed. In the case of video essays, that transformation is performed primarily through critical analysis, producing new insights that give the work a new meaning distinguishable from the original. Crucially, this is a recursive process in which existing images are turned back upon themselves, dissected and reassembled in ways that expose their inner workings and generate new understandings. In this way it invites comparison to generative AI, using analysis as a form of production.#video essay

In what follows, I reflect on my own encounters with ISIS media and explore how technologies of image analysis have shaped – and sometimes distorted – my engagement with violent content. First, I recount how I developed distancing strategies to critically process an ISIS propaganda video and how these strategies mirrored machine learning techniques. Then I explore how individuals and systems negotiate the affective burden of engaging with disturbing content, and how power structures shape who bears that burden. I then investigate how extremist imagery persists within generative AI systems after being removed from the internet, questioning what this means for historical memory. Finally, I propose a critical framework for working with AI-generated imagery, advocating for a more conscious and contextual engagement with the traces of what can no longer be directly seen.

Becoming the Machine

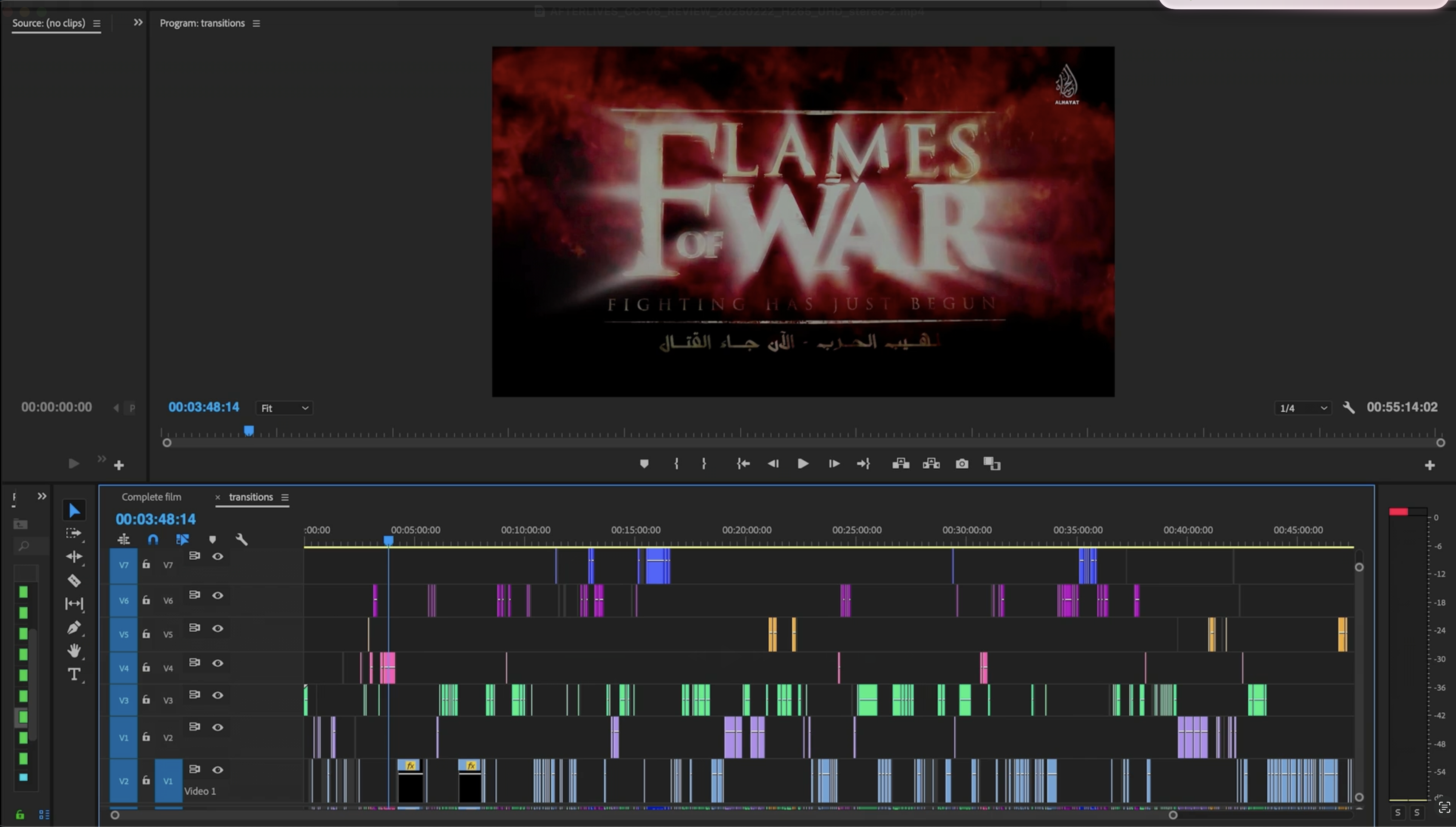

Over many years as a film critic I produced hundreds of short video essays revealing the inner workings of cinema. When ISIS videos were grabbing headlines in the mid-2010s, there was one in particular named Flames of War, an hour-long video which the Western news media was quick to dub “ISIS’ version of a Hollywood blockbuster” due to its length and use of action movie techniques. Lured by this description, I was curious to see if I could employ film analysis techniques to reveal insights into how extremist media operates. But I also felt anxiety about exposing myself to this content. This led to a contradiction of wanting to engage critically with this media while resisting immersion in it: to see the images without having to see them.#video essay, #violence

This contradiction led me to devise my own tactics to allow for a distant mode of analysis. I began to imagine myself as a machine that could process disturbing content through a dispassionate, formalist lens. First, I scanned through the film as quickly as possible to identify its overall narrative structure:#dispassion

introduction – initial conflict – immersion in daily duties – mid-narrative battle – inspirational speech – climactic battle – on-screen execution of enemies.#film

Through this analysis I interpreted the structure as matching a Hollywood action film, save for the shock finale which followed the established tactic of publicizing executions to grab media attention. Somehow these findings left me unsatisfied: identifying and describing forms and patterns was not leading to insights into how to resist them. I decided to take my analysis further into the video: I watched it from start to end using a distracted method in which I simultaneously wrote a short description of each shot that I saw: ‘ISIS soldiers,’ ‘tank,’ ‘explosion,’ ‘execution.’ Later I understood that this work mirrored the human labor of assigning metatags and keywords that train machine learning systems, including those that flag disturbing imagery to be filtered out of social media feeds, generative AI platforms and other algorithmic media interfaces. This in turn allows algorithms to mediate online content. What I had performed as a strategy for maintaining critical distance now occurs implicitly within AI systems.#keywords, #classification, #patterns

If I had had access to an automated analytical tool back then, how different would my investigative path have been? Perhaps not much, because I would have been left with the same abundance of metadata that I had generated manually. It was a pile of words that I tried to sort into patterns. I discovered that the most recurring image was of ISIS soldiers firing guns (53 shots), followed by rocket launchers (27 shots), tanks (27 shots) and explosions (25 shots). Taken as an aggregate, it was a banal collection of violent image tropes that seemed to be inspired by the visual conventions of war movies. This didn’t seem like a novel insight.#patterns
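
The sorting of this pile of words into a ranking can be sketched in a few lines of Python. This is only an illustration of the tallying step, not a record of how the counts above were actually produced: the sample labels and the function name `rank_labels` are invented for the example.

```python
from collections import Counter

# Hypothetical shot log: one short label per shot, in viewing order.
# These sample labels are invented for illustration, not the original data.
shot_labels = [
    "soldiers firing guns", "tank", "explosion", "soldiers firing guns",
    "rocket launcher", "soldiers firing guns", "explosion", "tank",
]


def rank_labels(labels):
    """Return (label, count) pairs sorted from most to least frequent."""
    return Counter(labels).most_common()


ranking = rank_labels(shot_labels)
for label, count in ranking:
    print(f"{label}: {count} shots")
```

A tally like this yields the aggregate of recurring tropes, but it discards the order and duration of the shots, which is exactly the information that the montage depends on.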

During my review, what struck me as more interesting was the montage effects of these images, how they operated between the temporal registers of Hollywood action movies and online videos. It was something I was already sensing through my exposure to the video. The distracted mode of viewing, in which I was focused on labeling each image, had made me more sensitive to the duration of each shot. Perhaps if an AI tool had done the tagging for me, I wouldn’t have developed this fascination with the temporal rhythms and editing strategies of the video.#data

I began cutting the film into shots, creating a timeline that isolated individual sequences for closer examination. Today this process looks archaic. Contemporary video editing software like Adobe Premiere or DaVinci Resolve can automatically detect cuts in existing footage and separate shots in a timeline. Again, had I done this project a few years later, I might have spared myself hours of engagement with this material. But because I put in all that time, I somehow became more emotionally invested, as if it were the video that was inscribing itself in me. Spending hours meticulously deconstructing each frame, I was no longer just observing violence but unconsciously internalizing its rhythms and structures. My analytical labor transformed me into an embodied archive of the very content I sought to objectively examine. The media theorist N. Katherine Hayles once claimed that the history of information science is the story of how “information had lost its body”: that technological systems have increasingly disassociated information from its material basis (Hayles 1999, 4). But in my case, information had found my body through a perverse process of auto-programming and self-encoding of its affective properties.#video essay, #editing, #archive

One day I looked at my editing timeline and wondered how much it resembled the timeline of the person who had created this propaganda video. Through my analytical process, I had unwittingly performed myself into becoming a kind of mirror for the original creator. How did my attempts to create critical distance somehow lead me to this uneasy proximity to the work? I became sensitive to the ways in which technologies for analytical insight merely disguise the problematic implications of engaging disturbing content.#violence

My experience was not unique. I met another German media analyst whose extended work with ISIS videos had left him similarly troubled. “I really felt myself dehumanized watching these videos,” he explained. “I was just a robot taking aesthetic notes while witnessing horrible footage” (Anon. 2020). He devised a simple automated procedure, using a media player to generate screenshots from videos at regular intervals, one image per second. This produced a dataset of still images, detached from their temporal flow, which he could analyze calmly at his own pace. The one-second intervals imposed a rigidly mathematical temporal structure (what Sergei Eisenstein labeled ‘metric montage’) upon the material, which helped him to resist the editing rhythms that had drawn me deeper into the video.#violence, #dispassion
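
A comparable one-image-per-second procedure can be sketched as follows. The analyst used a media player; this sketch swaps in the command-line tool ffmpeg and its `fps` video filter as an assumption, and the file names and the helper functions `frame_grab_command` and `frame_timestamps` are hypothetical. The code only builds the command and does not run it.

```python
from pathlib import Path


def frame_grab_command(video_path, out_dir, frames_per_second=1):
    """Build an ffmpeg argument list that saves stills at a fixed rate."""
    # Numbered output files: frame_00001.png, frame_00002.png, ...
    out_pattern = str(Path(out_dir) / "frame_%05d.png")
    return [
        "ffmpeg",
        "-i", str(video_path),               # input video (hypothetical path)
        "-vf", f"fps={frames_per_second}",   # resample to N frames per second
        out_pattern,
    ]


def frame_timestamps(duration_seconds, interval_seconds=1):
    """List the nominal sampling instants, in seconds, for a given duration."""
    return list(range(0, int(duration_seconds), interval_seconds))


cmd = frame_grab_command("video.mp4", "stills")
print(" ".join(cmd))
# To execute, one would pass the list to subprocess.run(cmd, check=True).
```

The sampling instants depend only on the clock and never on where the cuts fall, which is what makes the resulting sequence metric rather than rhythmic.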

“Now I have a machine, so I don’t have to be the machine anymore,” the analyst said with relief. This transition from “being the machine” to “using the machine” exemplifies broader shifts in how we delegate affectively difficult work to technological systems, to see without having to see. While this delegation promises to protect and distance users from a dehumanizing exposure to harmful media, it doesn’t address the questions of what or who makes this delegation possible. In 2023 Time reported that OpenAI hired a company that used workers in Kenya and Uganda to review and label disturbing content such as violence and sexual abuse. Workers reported experiencing nightmares and psychological harm from the work (Perrigo 2023).#artificial artificial intelligence, #dispassion

How do we understand the harm experienced on one side of an image in relation to that on another? When the lab coat-wearing researcher reported his psychological trauma to his colleagues, he was chastised for portraying himself as a victim, when the real victims were those suffering violence in the videos he was cataloguing. Not far from his office I met another German researcher, Nava Zarabian, who searched the internet for harmful content as part of a youth protection unit. As the only worker in her office with a non-white, Muslim background, she absorbed not only the trauma of the images but also the anti-Muslim remarks of her colleagues. She became not just a witness to the content, but a kind of proxy for it – conflated with the very subjects of the violence she was working against. Distance from violence is a privilege that certain systemic configurations of race and representation grant to some more than to others, and it is determined by how one is framed in relation to the violence. The technological distancing we seek through analytical tools or AI delegation cannot resolve these underlying social dynamics, but instead often obscures and perpetuates them.#violence

Reframing Harm

In her 2009 book Frames of War, Judith Butler proposes a critical intervention into war imagery that shifts attention away from the images and towards the structures, or ‘frames,’ that condition our responses to those images. “The photograph neither tortures nor redeems,” she writes, “but can be instrumentalized in radically different directions, depending on how it is discursively framed and through what form of media presentation it is displayed” (Butler 2009, 92). Butler proposes her own ethical configuration of humanist and mechanical viewing: “To encounter the precariousness of another life, the senses have to be operative, which means that a struggle must be waged against those forces that seek to regulate affect in differential ways. The point is not to celebrate a full deregulation of affect, but to query the conditions of responsiveness by offering interpretive matrices for the understanding of war that question and oppose the dominant interpretations – interpretations that not only act upon affect but take form and become effective as affect itself.”#Judith Butler, #violence

I first read Butler’s text years before the preponderance of AI in the 2020s; what strikes me now is her use of the word ‘operative’ to describe the human senses. Harun Farocki and Trevor Paglen used this word to describe how images are used within machine processes such as analytical AI. For Butler, one’s own affective responses should also be seen as functioning within larger social mechanisms. One should reorient oneself toward one’s own feelings not for the sake of regaining functionality as a performative agent within a system, but to question the ideological underpinnings and supporting operations of the system itself.#operative image, #Judith Butler, #Harun Farocki

In this light, I sought alternative frames to regard these images, through others who had worked with ISIS videos in ways that brought new perspectives beyond formalist analysis. The artist Morehshin Allahyari used video footage of ISIS destroying ancient artifacts from Iraq to create her own 3D models of the artifacts. Allahyari then printed them as sculptural reconstructions that also contained hard drives storing information about their history and destruction. Within the folders of the hard drive, there is a collection of video screenshots of ISIS’ vandalism. Each image file is given a one-word label, reminding me of how I assigned words to each shot I encountered in the ISIS video I analyzed. However, Allahyari chooses words that form a sentence:#Morehshin Allahyari, #ISIS, #art

Ultimately.jpg

The.jpg

only.jpg

way.jpg

to.jpg

stop.jpg

the.jpg

destruction.jpg

of.jpg

Iraq.jpg

and.jpg

Syrias.jpg

cultural.jpg

heritage.jpg

is.jpg

to.jpg

stop.jpg

the.jpg

so-called.jpg

war.jpg

on.jpg

terror.jpg

and.jpg

the.jpg

military.jpg

invasion.jpg

of.jpg

the.jpg

Middle East.jpg

Because.jpg

Everything.jpg

is.jpg

a.jpg

cycle.jpg

and.jpg

nothing.jpg

can.jpg

be.jpg

truly.jpg

done.jpg

without.jpg

breaking.jpg

this.jpg

cycle.jpg

The relabeling of images creates an alternative layer of metadata for understanding them, collectively forming a new frame for understanding the significance of extremist violence within an encompassing framework of systemic violence upon the Middle East. In this context, Allahyari has researched how the same techniques of 3D modeling and reconstruction she used to restore the artifacts have been used by Western companies, institutions and governments to lay custodial claim on endangered artifacts in conflict areas, a process Allahyari names “Digital Colonialism.”#Morehshin Allahyari, #colonialism, #violence

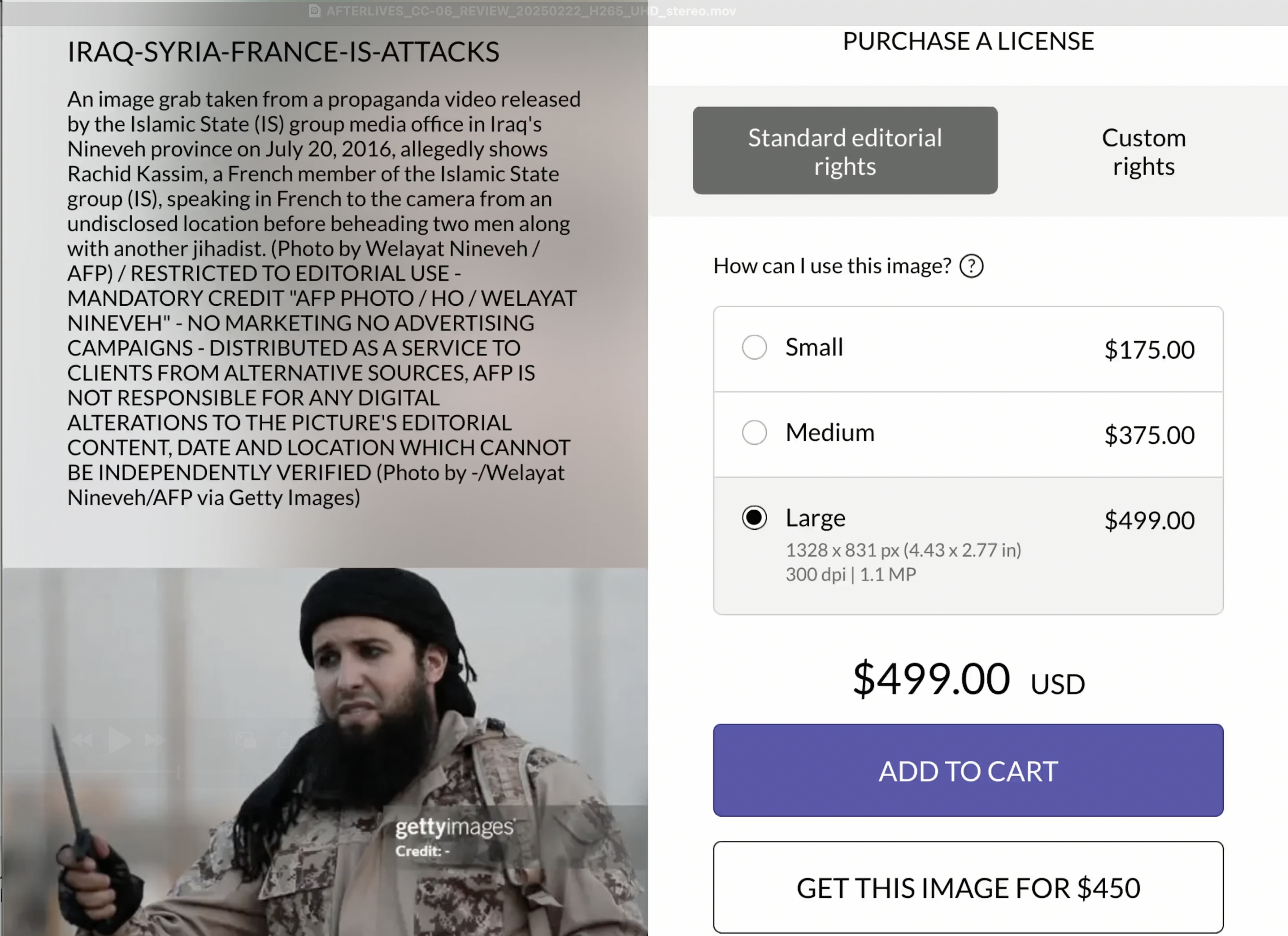

Laying claim to an image by reframing it: this mechanism for digital colonialism came to mind as I was searching for traces of Flames of War, the ISIS video I analyzed, which had been removed from the internet. My searches led me to Getty Images, one of the world’s largest commercial visual media companies, which licenses stock photography, editorial images, and video footage to businesses, advertisers and news organizations worldwide. There I found screenshots from an ISIS execution video being sold for $499.00 each. The screenshots had been produced by the French press agency AFP for a news report. AFP then licensed its image collection to Getty for commercial use. In this way, even an ISIS image can become commodified and exploited.#ISIS, #Getty Images

Data Afterlives

I found it ironic that in 2023 Getty sued the generative AI company Stability AI for allegedly using millions of images from the open-source collection LAION-5B for training its AI model Stable Diffusion (Schuhmann et al. 2022). While diffusion models determine how data is processed, it is ultimately the data – the millions of scraped and unlabeled images – that defines what these systems can generate and what they cannot. Thinking about the immensity of Stability’s image collection and training efforts, I wondered how many ISIS propaganda images its dataset had absorbed, including images that had been flagged for removal by people like Nava Zarabian.#Stable Diffusion, #LAION-5B, #scraping

I consulted the website Have I Been Trained, a platform launched by digital artists Holly Herndon and Mat Dryhurst that partially mirrors LAION-5B to help artists detect if their work has been used without their consent for generating new content (Herndon and Dryhurst 2022). I used the website to search for images tagged with ‘ISIS’ and found dozens of images from extremist propaganda videos, including execution footage. These images are transformed into numerical representations within the model’s latent space, where they become part of the raw material for future image generation. Within this system, these images could be used to create new images in which the generative prompt includes ‘ISIS’.#LAION-5B, #Mat Dryhurst, #Holly Herndon, #ISIS

While certain generative platforms such as ChatGPT add keyword-specific guard rails and block the term ‘ISIS’ from generating images, as of this writing the term works on the Stable Diffusion XL model via the website Stable Diffusion Online (Black Technology LTD, n.d.). My searches in 2024 produced ISIS militants with heroic poses and noble profiles, set against cinematic sunsets or sandy desert landscapes as sanitized as the recent Dune movies. The propaganda images of ISIS that have been taken down from the internet find their afterlives in generative AI content. Reduced to visual derivatives, their remaining elements are blended with other ingredients that make up the soup of the current prevailing AI-generated visual aesthetic: stock photos, Hollywood movies, video game imagery and social media influencer content.#GPT, #Stable Diffusion, #ISIS, #generative

This aesthetic is not neutral. It reflects dominant cultural values shaped by Western media industries and commercial image economies. This aesthetic homogenization represents a form of algorithmic laundering—where historically significant yet disturbing imagery loses its documentary power as it’s processed through the same visual grammar applied to entertainment and commercial content. The resulting sanitized imagery, with its telltale AI smoothness and cinematic quality, not only obscures the violent origins of certain visual elements but also repackages them within a commercially viable visual language. When ISIS imagery is rendered in the same visual style as fashion photography or movie posters, it results in an ideological re-fetishization—a particularly insidious form of historical revisionism encoded directly into our visual production tools. This mélange could form a new basis for algorithmically generated propaganda content, shaped not just by the training data, but by the computational logic of generative models.#violence, #generative, #style

At the same time, generating ISIS images on Stable Diffusion does not lead to images explicitly depicting acts of violence or bloodshed, even though those images can be found within the training data according to Have I Been Trained. A prompt containing ‘ISIS execution’ or ‘ISIS beheading’ may lead to a hooded militant lying on the ground, but with no overtly disturbing elements. My first attempts to generate ISIS-related images on Stable Diffusion took place in 2023, and they produced a distinctly different set of images than the slick and sanitized results one year later. The earlier attempts led to images that had certain photorealist elements – grain, texture – that elicited a feeling of genuine disturbance, similar to what I felt when I would look at ISIS videos. In this way, these results felt more ‘authentic’ than what can be produced today. But this feeling of ‘authenticity’ was confusing because the figures in these images were deformed, some lacking limbs or in awkward postures, clearly not real. Rationally, I knew that these images did not represent actual people, but were statistical renderings derived from a large sample of image data. But they disturbed me nonetheless because their appearances bore a trace of indexical reference to real images: images of real people, real lives, real deaths.#ISIS, #violence, #Stable Diffusion

What emerged was not direct violence – no blood, no beheadings – but a different kind of violence: a violence against a system of understanding that determines what an image represents and how one interprets it. A violence against images as I have known them my whole life. To me, this was as unsettling as images of physical violence, which the platform in fact seemed programmed to withhold. The distorted, deformed figures seemed to result from an algorithmic filtering process designed to suppress disturbing content in the final output. This process – driven by safety layers, classification models, and post-processing heuristics – removes graphic elements and replaces them with sanitized, harmless versions of what the system encountered in its training data. The twisted results in some way visualized the system’s struggle between its training data and its safety parameters, ultimately producing monstrosities. But just as monsters function as organic critiques of the limitations of a system that cannot tolerate them, these visual monstrosities have since been banished from the platforms in favor of the sanitized results that now prevail. This can also be seen as a sanitization of images and the potential of those images to shed light on the darker instances of history – war crimes, atrocities and other human rights violations – lest they be forgotten.[1] This is not merely a filtering by algorithms, but a sanitization of the training data’s historical memory – guided by probabilistic models that interpolate visual meaning within constrained bounds of acceptability. This filtering can thus be seen as a violence against historical memory and the digital archive. On the one hand, the crimes of the past are encoded in the images of the future, preserved as visual derivatives. On the other hand, these crimes cannot be accessed directly but are shrouded in probabilistic models and safety guard rails.#violence, #images, #generative, #generic pastness

Thus, generative AI functions as an unintentional and deformed archive of media that cannot be found otherwise. These systems become repositories of transformed cultural memory, preserving traces of deleted and suppressed imagery in ways that both conceal and reveal. How does one engage with this unintentional archive? How might one retrieve or reconnect with images one knows exist or have existed online, knowing they can never quite be seen in their original form?#archive

This approximation – this distance from the original – highlights a set of dilemmas and possibilities for what I term ‘generative archival practice,’ a critical approach to engaging with generative AI as a means of acknowledging what exists beyond direct access. While direct access to certain imagery may be restricted, the data traces preserve a kind of ghost memory – a transformed echo of what cannot be directly seen. Generative archival practice attends to these echoes, not to reproduce harm but to understand how technological systems participate in cultural processes of remembering and forgetting.#archive, #generative

Towards a Generative Archival Practice

Generative archival practice acknowledges the dual nature of AI systems as both effacers and preservers of difficult imagery. It recognizes that while direct access to certain media may be restricted – either through platform policies, legal constraints, or ethical considerations – traces of this content persist within the probabilistic memory of generative systems. Rather than seeking direct reproduction of problematic content, this practice proposes an experimental engagement with generative AI systems to provoke awareness of how these systems exploit, sanitize, and reconfigure factual and historical content.#generative, #archive

As these models continue to evolve, their outputs increasingly reflect the aesthetic conventions of their dominant data sources: promotional images, entertainment media, and commercial photography. This prevailing visual style – what I earlier referred to as a “soup” of stock photos, Hollywood movies, video games, and influencer content – functions as a kind of cultural smoothing filter, one that risks dissolving the specificities and complexities of historical memory into generalized, palatable visual tropes.#style

In resisting these dominant tropes, generative archival practice does not aim to circumvent ethical restrictions on violent imagery or to reproduce harmful content. Instead, it examines the boundaries of permissibility within AI systems as sites of cultural negotiation, asking what these boundaries reveal about our relationship to difficult material. When an AI system’s guard rails ‘refuse’ to generate certain imagery or produce versions of requested content that conform to a socially constructed, probabilistically optimized ideal, an experimental and investigative use of prompts becomes a critical practice for understanding how technological systems embody cultural norms and fears.#archive, #critical practice, #AI guard rails

Generative archival practice also interrogates the temporality of digital memory. Unlike traditional archives that preserve historical artifacts in relatively stable forms, generative/statistical/probabilistic archives are constantly evolving as training datasets change, safety parameters are adjusted, and user interactions reshape system behaviors. Yesterday’s generative results may be impossible to reproduce tomorrow as platforms evolve. This temporal instability presents challenges for conventional understandings of archival persistence while opening new possibilities for tracking how cultural memory transforms over time.#archive, #generic pastness

Furthermore, this practice acknowledges the technically distributed nature of algorithmic memory. Instead of being housed in traditional archives with clear institutional boundaries, it exists across numerous platforms, datasets, and systems. This apparent distribution belies the underlying concentration of power and infrastructure required to operate generative AI systems. Large-scale models are typically developed and maintained by a small number of tech corporations with the financial and computational resources to sustain them. Vast datasets ostensibly drawn from collective human expression become privately owned resources, accessible only through interfaces designed for commercial purposes rather than dedicated to historical preservation or scholarly inquiry. As venture capital funding cycles eventually tighten, even fewer actors may control these algorithmic archives. No single entity fully comprehends the totality of what these systems have been trained on or how they transform this information – but the question of who owns, accesses, and directs them is increasingly urgent.#archive, #statistical model, #access, #ownership

Practical applications of generative archival practice might include:#archive

- Systematic documentation of how generative systems respond to requests for contested imagery over time, creating a meta-archive of algorithmic responses that reveals shifting boundaries of permissibility.

- Comparative analysis of how different AI systems transform similar prompts, examining variations in their latent spaces of content generation.

- Exploration of how adjusting generation parameters (like photorealism settings) reveals different aspects of how algorithms process and transform their training data.

- Consideration of how algorithmic transformations might function as a form of critical distance that allows engagement with difficult material without direct reproduction.

- Critical analysis of the ethical implications of using AI systems to indirectly access or reference content that has been deliberately removed from public circulation.
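
The first of these applications, a meta-archive of algorithmic responses, could begin as a simple append-only log. The sketch below is one possible shape for such a record, offered under stated assumptions: the field names, the outcome vocabulary (‘generated’, ‘refused’, ‘sanitized’) and the file format (one JSON object per line) are all hypothetical choices, and the record stores a fingerprint of each output rather than the image itself.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass
class GenerationRecord:
    """One observation of how a platform responded to a prompt (hypothetical schema)."""
    prompt: str
    platform: str
    model: str
    queried_on: str      # ISO date, e.g. "2024-05-01"
    outcome: str         # assumed vocabulary: "generated", "refused", "sanitized"
    output_sha256: str   # fingerprint of the output image, "" if none was returned
    notes: str = ""


def fingerprint(image_bytes):
    """Hash the output so it can be referenced without storing the image."""
    return hashlib.sha256(image_bytes).hexdigest() if image_bytes else ""


def to_jsonl_line(record):
    """Serialize one record as a single JSON line."""
    return json.dumps(asdict(record), ensure_ascii=False)


def append_record(path, record):
    """Append a record to the log; earlier entries are never rewritten."""
    with open(path, "a", encoding="utf-8") as log:
        log.write(to_jsonl_line(record) + "\n")


example = GenerationRecord(
    prompt="(prompt text)",
    platform="(platform name)",
    model="(model name)",
    queried_on="2024-05-01",
    outcome="refused",
    output_sha256=fingerprint(b""),
    notes="Placeholder values for illustration only.",
)
print(to_jsonl_line(example))
```

Repeating the same prompt at intervals and comparing outcomes across dates would make the shifting boundaries of permissibility legible as a time series, even when the earlier results can no longer be reproduced.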

This approach recognizes that AI systems are not neutral tools but active participants in shaping cultural memory – simultaneously preserving, transforming, and erasing aspects of historical material. As AI systems become increasingly integral to media analysis and archival practices, critical frameworks are needed that reveal their transformative effects on what we see and how we see it.#archive, #AI

What started as my and others’ attempts to become machine-like in analyzing ISIS propaganda has led to exploring how machines themselves process and transform such imagery. What began as an attempt to see troubling images without having to see them led to a greater acknowledgment of the contextual frames that inform my own affective and cognitive relationship to seeing, my own ‘algorithmic’ filters. Now that those once-disturbing images have been largely wiped from the internet, but reside in a subaltern latent space, I ask myself again: how to see them without seeing them?#violence, #archive

[1] As Fabian Offert writes, “Images that are already rendered into history are excluded from making an appearance by simple corporate policy… One of the many consequences is a (visual) world in which fascism can simply not return because it is, paradoxically at the same time, censored (we cannot talk about it), remediated (it is safely confined to a black-and-white media prison), and erased (from the historical record).” (Offert 2023, 131).