It is often said that generative AI is conservative, even reactionary, that it can only recreate versions of the past contained in its training data. That data, it is also regularly pointed out, contains mainly historical biases, which generative AI reproduces and amplifies. Both of these claims are correct, and they trigger calls to make AI more fair, accountable and transparent. In my talk, I want to shift away from such questions of representation and focus on the generative dimension. Generative AI produces “unreal data”, that is, presentations of things that do not exist but might come into existence based on that data. They are premonitions of worlds to come. Thus, the question we need to ask is perhaps less whether the images (videos, texts, and sounds) are correct or fair, but whether the worlds they envision are desirable.

Read full transcript (generated by Whisper)

Good morning, everyone. It seems that nine is the new ten at art schools. Thank you, Hito and Francis, for putting everything together, and to Anja for the excellent organization. I feel there's a great urgency to what we are trying to do. Since I have the privilege to go first, I will lay out a fairly broad survey of issues that might help us to find some of the questions to which AI is proposed as an answer. And I'm sure we'll go deeper into many of these issues as we go through today. But I think it's important to understand that AI is not an isolated phenomenon. It's not an application here or there, but part of a large-scale transformation, in a similar way that the Internet is not an app on your smartphone, but a constitutive and constituted part of today's reality that extends from your inner life all the way to the outer reaches of the cosmos. So let's get started. A quick overview of what I'm trying to do in the next half hour, 40 minutes. I want to talk about generative artificial intelligence. I will focus on the generative part.

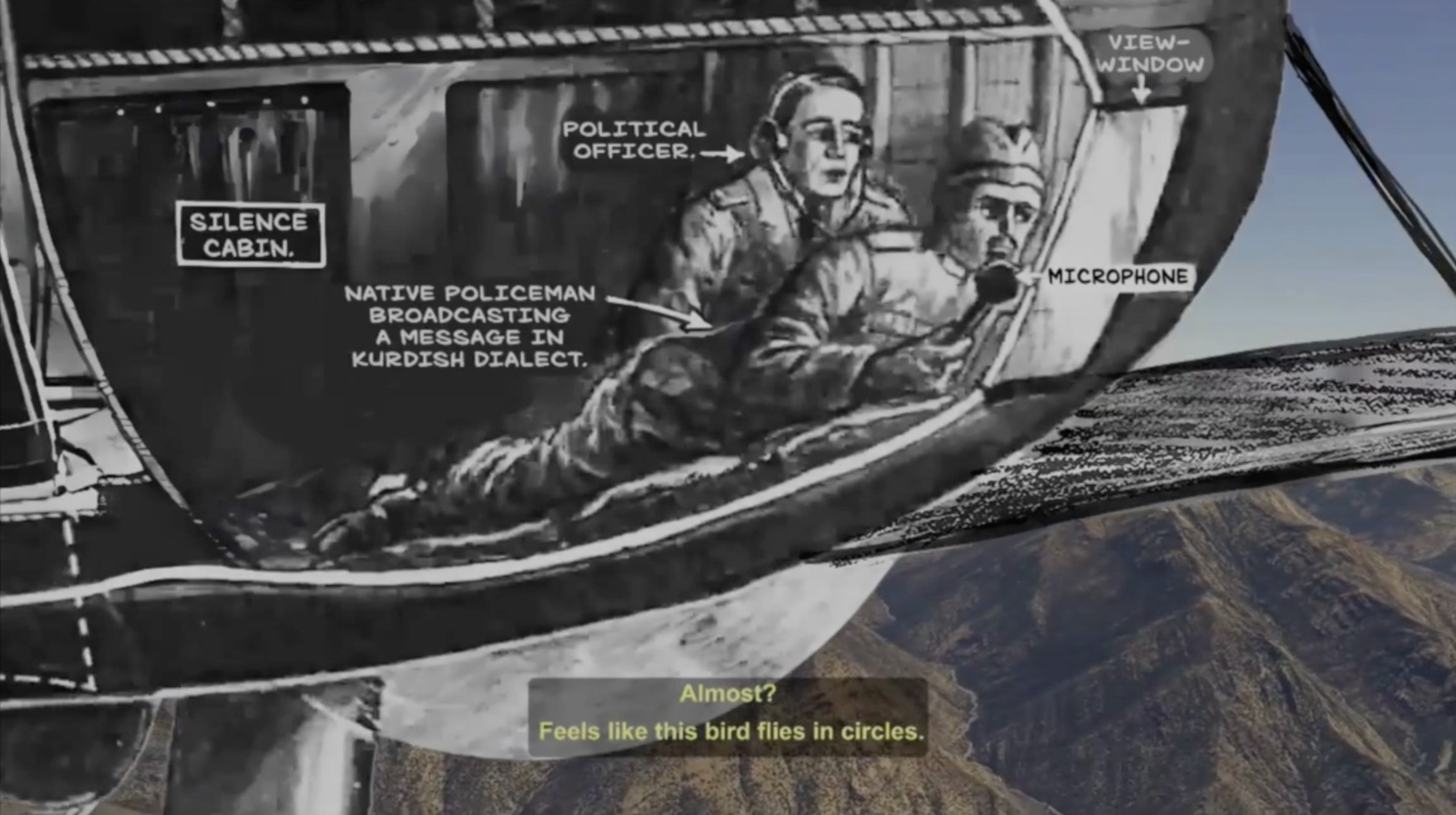

Then I want to look at what is actually being generated, on three levels. On the level of content, the Shrimp Jesuses, as we have seen or heard about. On the level of political economy; that's perhaps the level that Palantir is working on. And by the way, Palantir, as you probably all know, is not just in Ukraine and in Israel, but also here in Bavaria and in many other places in Germany, assisting police efforts. Not anymore? So they stopped that. And the third level I want to talk about is the level of ideology. So beyond its immediate application, all the visions, the desires driving that field. And then I will end my presentation by mentioning a few of the artistic positions that are interesting ways to intervene in, or think about, these different levels, from my own point of view. So whenever we talk about AI, there's a danger of talking about everything and nothing because the term, as we all know, is so imprecise. So let me be a bit more specific about what I mean here. I do not talk about the artificial part, because the distinction between the natural and the artificial really makes no sense.

As we know from every culture other than Western high modernity, natural, artificial, nature, culture, these dichotomies don't help. On the contrary, they make it really hard to understand what's going on. And I will also not talk about intelligence, because that term is even worse. It is at best a marketing term invented for a grant application in 1956. And at worst, it serves to drown out the relevant issues in a tidal wave of shallow philosophy and empty spirituality. So I really want to talk about the generative part. And for this, I try to distinguish, quite roughly and probably not very precisely, between generative AI and analytical AI. So generative AI is really AI that generates, that fabricates things, that makes these unreal creations, as I call them in the title, things according to its own criteria. On the other hand, what one might call analytical AI is really something that transforms an input according to some transparent criteria. The distinction between the two is more an epistemological than a technological issue. Put simply, analytical AI can be incorrect to various degrees, while generative AI cannot.

It's really impossible to produce the wrong image in any meaningful sense of correct or incorrect. An example of an analytical application of AI, one that has a relationship between input and output that humans can evaluate, would be translation software, where you see quite well whether the translation is a good one or a bad one, and you can correct it and so on. And on the other hand, we have text generation, which really is just more or less interesting, or not interesting at all, or appropriate or inappropriate. But there's no real way of saying whether it's correct or not. The distinction between analytical and generative is not a hard criterion, but it serves here as a rough heuristic. There are a lot of gray zones, and the same thing can be analytical or generative depending on how you use it. You can use ChatGPT for translation, which would be a more analytical use, and we can understand quite well what it does, even though we don't know the mechanics of how it's being done. But we understand the process.

We understand the process of analysis of big data. It's something that is not hard to understand in a way. But we really do not know the process of generation; even though we have some technical sense of what it is, there's no cultural equivalent to that. So if I want to focus here on the generative, I really want to wonder what is actually being generated here. And as I said, I have three levels: content, political economy and ideology. And let's look first at the level of content generation. These are among my favorite images generated so far, and I'm sure many of you have seen them. Here we have people of color as popes. There were also people of color as Vikings and, God forbid, people of color as Nazis. It feels like ages ago, and I looked up when it actually was. I was surprised that it was just in February this year. But that perhaps helps us to remember that there was a real shitstorm about these images and a lot of pious hand-wringing about historical truth and that we all lose our sense of history and so on.

Which was quite surprising in a way, because after years and years of talking about bias and discrimination through AI, the shit really hit the fan when white people started to feel discriminated against, which of course says a lot about the discursive power and the insecurity of this particular class of people, but not so much about the particularities of these images. Because we can debate the historical veracity of many images. There are actually images of a female pope, Pope Joan, who is said to have assumed the position of the pope in the ninth century, only to be exposed by a sudden childbirth during a procession. Today we think of this largely as a myth, as a story invented in the thirteenth or fourteenth century, probably by Boccaccio, who first wrote it down. But at some point there was a statue in the cathedral of Siena, among the historical procession of popes, that depicted her. So there are precedents of images of a female pope.

But in the case of these AI-generated images, the questions of veracity and of representation, does it actually represent something, don't really help us at all. A picture of a pope that looks more like the cliché of a pope that we expect, the old white man with some sort of beatific smile, would not be any more historically correct than the pope as a woman of color. These images don't really refer to anything outside of themselves. As you know, [name unclear] talked about this quite a bit. So classical photo image theory, with questions of representation, indexicality, of framing, doesn't really help us here. You can use another generative AI program to enlarge the frame or to change the angle of perspective, approaches that are critical to classic image analysis: how would the scene look from another point of view? What do we not see in this particular frame? But we are actually not seeing any more by applying these categories. So these images are not documenting something that exists in real space. On the contrary, they're really unreal in the sense of not representing anything. Rather, these images show a world that does not exist but that, given the past and the present, is not unthinkable and could therefore exist.

These images show something virtual in the classical sense of something that is possible but perhaps not yet actual. What we are seeing here is a single point in the latent space, and the latent space is the past organized as a present which contains all possible futures. We know in very general terms how these images come together. On the one hand, there is an analysis of patterns in images of portraits and in images of popes, concentrating on certain patterns that exist across a relevant category of images. Recreating these patterns with some degree of randomness at various points of the process is what creates these images, which take up these patterns but vary them in unpredictable ways. But using only these techniques of pattern analysis, it is very unlikely that we would ever have seen the images that we have been presented with, popes as people of color, since the subset of images that are a portrait, a pope and a woman is statistically insignificant. But as we have seen, it is not zero.

But no commercial generative AI really works like this, by just analyzing patterns in data. They all have what is often referred to as guardrails, which can be either normative borders that cross off certain sections of the latent space that are deemed indecent or not good for business or offensive to certain values, or they can appear as altered weights that change some of the patterns into something that is more desirable than what is actually contained in the training data. So with the images of the pope as a person of color, we see an example of trying to correct against the bias in the training data, which overrepresents certain groups, white people from America. And you can see in these images very well these two structures at play. So from the patterns in the training data, we get an implicit bias from the distorted way reality is represented in the training data, and from the norms and guardrails we get an explicit bias about what the people who are employing these technologies actually want them to produce and the kind of reality they want to envision.

So every image that we see is a highly situated production, bearing witness to the historical, political nature of the data and the values and interests of those who employ it. This makes the boundaries between what can exist and what should exist extremely blurry. There is no image that is not normative in this way. And this is absolutely unavoidable. Even if you were simply using existing, relevant patterns and said no guardrails, no norms, then you would have a very strong normative position that says let's propagate the bias that is already contained in the data. So even doing nothing is a very strong position here. And this is actually not necessarily a bad thing. Right? If we see a generated image as a world to come, then the question of how this world comes into being, and what kind of world it is, is actually an important one, rather than whether it's correct or incorrect. And this is really an indication that we have to deal with the actual generative process, with the process that makes decisions about the world.

We have to think about what it generates and what it wants to produce. So this means we really should not ask whether the image is correct, but whether we want the world that the image brings into being to exist. But of course, it is not just content that is being generated. It is not just what happens on the screen, and whether we like what we see or don't like what we see. So let's flip the perspective here from the figure, that is the image, to the ground from which it emerges. And the ground consists of a massive and massively centralized infrastructure. In the West, there are only four or maybe five main companies that really make up this ground. So we have Microsoft, Amazon, Google, Meta, and perhaps even Apple. What we see here is really a black box. It's a black box technically; we know this from the difficulty of understanding what happens inside the neural network. But it's also a black box legally. You cannot enter these places; you have to sign non-disclosure agreements, and so on. It is a black box organizationally.

If you ever try to enter Google, you will notice this. And it's a black box architecturally. If you go and look at a data center, there's really very little to see, and this is quite deliberate. But this infrastructure really is an enormous concentration of power. It's a concentration of making decisions about the things that can exist. And it's creating dependencies and extracting value from everything that runs on top of this infrastructure. So seen in this way, AI, and here it's both analytical and generative, is really the next iteration of the internet. It's an essential infrastructure likely to be present everywhere. If you want to make periods, you can say that from 1980 to maybe 2000, we had a decentralized infrastructure and decentralized communication. Then up to 2020, perhaps, we had a centralized infrastructure, social media, but still quite decentralized communication; we were talking to our friends on it. This was the age of platforms and platform economies. And now we have both a centralizing infrastructure and centralizing or centralized communication. And all the big companies understand this intimately, because they are the infrastructure providers that drove this first wave of centralization and are now driving the second wave of centralization.

Again, perhaps with the exception of Apple. So we can conclude here that the attention economy is over. It's no longer about shaping preferences, what Zuboff called behavioral modification, but really about shaping existence, shaping future pathways. Perhaps the prototypical application of generative AI is sorting through job applications, and what is generated here is not an image, but your life chances. So this is a classical political economy analysis about the distribution of power, about the control over the points where most surplus value can be extracted. And the question to which generative AI is the answer, from this point of view, is how to centralize power and wealth. It's a technology of extreme inequality. It's a technology, as Dan McQuillan said, of austerity and of remote administration. And we see that quite clearly in the way the techno culture in Silicon Valley is moving away from, let's say, liberal democracy and towards different degrees of authoritarianism, be that fascism, be that neo-monarchism, be that the network state, or all kinds of ways. I'm sure we will hear about this later. So what we see is the political economy of the growth of hyperscale data centers, larger and larger infrastructures.

Microsoft invested almost 6 billion in data centers in 2023 and has increased its investment to almost 24 billion in the first three quarters of this year. So this is a really, really big industrial-scale investment. And in connection with OpenAI, there's even talk about a hundred-billion data center to build a supercomputer for generative AI with the code name Stargate. This is an important name. So this sets off a neo-colonial scramble for resources: minerals, components such as chips, but also land, exploitable labor relegated to ghost work, water and energy. And I want to focus on the energy part very briefly. We see here quite a typical chart of the electricity demands. And so by 2027, in three years, there's the expectation that the demands of AI will outpace those of the UK. And the UK is the 15th largest energy user in the world. So we're not talking about small things. And usually this is met with hand-wringing and "no, but this won't happen because we have efficient technologies" and so on, but there is actually a strand of thinking about energy use that really likes this idea of more energy, where this is not a bug but a feature.

And so we're talking about the future. And this is the last point I want to mention: it generates an ideology. And it's an ideology in the sense of an eschatological desire. So a desire towards an end time, a desire towards a fulfillment of an ultimate destiny of humankind. And I think that's a very important point. There are a lot of religious desires in technology: being all-knowing, all-powerful, everywhere at once, immortality and all of that. So we have all these religious ideas being expressed as science today, and this is nowhere more visible than in the field of generative AI. And I want to come to an end by playing a very short clip of an interview given by Sam Altman of OpenAI earlier this year in Davos, of all places, where he talks about energy.

We do need way more energy in the world than I think we thought we needed before. My whole model of the world is that the two important currencies of the future are compute slash intelligence and energy.

You know, the ideas that we want and the ability to make stuff happen and the ability to like run the compute. And I think we still don't appreciate the energy needs of this technology. The good news, to the degree there's good news, is there's no way to get there without a breakthrough. We need fusion, or we need like radically cheaper solar plus storage or something at massive scale, like a scale that no one is really planning for. So it's totally fair to say that AI is gonna need a lot of energy, but it will force us, I think, to invest more in the technologies that can deliver this, none of which are the ones that are burning the carbon, like that'll be those, all those unbelievable number of fuel trucks. And by the way, you back one or more nuclear. Yeah. I personally, I don't think there's anything like that. I personally think that is either the most likely or the second most likely approach to power in the world. Do you feel like the world is more receptive to that technology now? Certainly historically, not in the US.

I think the world is still unfortunately pretty negative on fission, super positive on fusion. It's a much easier story, but I wish the world would embrace fission much more. Look, I may be too optimistic about this, but I think we have paths now to a massive energy transition away from burning carbon. It'll take a while. Those cars are gonna keep driving. There's all the transport stuff. It'll be a while till there's like a fusion reactor in every cargo ship. But if we can drop the cost of energy as dramatically as I hope we can, then the math on carbon capture just so changes. I still expect, unfortunately, the world is on a path where we're gonna have to do something dramatic with climate, like geoengineering as a bandaid, as a stopgap. But I think we do now see a path to the long-term solution.

So the world here is composed of two things, information and energy. Matter matters only in as much as it provides information or energy. And we need more energy, period. There's no question about it. And it's actually a good thing, because AI forces and enables us to tap into new sources of energy. So AI is both the problem and the solution. So, yeah, we can make an argument that there's always been a relation between the amount of energy a culture controls and the things it can achieve. There's a clear relation between fossil fuels, artificial fertilizer, and the number of people who can be fed in the world. But this is really not what is meant here. What is meant here, and I'll leave it at this, is really something cosmic. It's really an idea of reaching a whole different level of civilization, one that is able not just to control everything on the world, and therefore use, at a future point, geoengineering to fix the problems that we create now, but really to extend itself beyond the planet. And we've seen that with all the space programs that are funded with the money that is made here from this particular political economy that I mentioned.

But we also see it in things like the name of this new supercomputer that Microsoft and OpenAI want to build for $100 billion, called Stargate. So it's really the gate to the stars that leaves behind the world as we know it, and probably also us. And with that, I think I'll conclude. Thank you.

So maybe let me respond first. Of course, we know those basically ideological demands to spend more energy from Silicon Valley cults such as effective accelerationism, which of course is not named after effective altruism for no reason. There are people like Guillaume Verdon, who is the main propagandist, let's put it like this, of this cult, who says that one needs to spend energy not to power AI or to do any other mundane stuff, but because the cosmos, the universe, wants us to spend more energy. This is what the universe wants, and this is why basically we need to consume more energy: in order to accelerate entropy, in order to help the universe to fall apart in total entropy, which is apparently what the universe wants.

So there are already basically ideological formations that articulate the things that you have said in almost shocking clarity and in unequivocal terms. Let me ask you something very mundane maybe. You started the talk by basically talking about unreal data or generative data. And I think that's very interesting. It reminds me of an idea that was already articulated by Vilém Flusser in the 90s or in the late 80s, then redeveloped by Farocki. And those guys said that at some point, in the 90s maybe, images stopped being representations and started being projections. So the image would be a project, something that is facing towards the future and articulating a sort of ideal state instead of a representation. And I was asking myself, first of all, how is the generative process which you are describing different from this early Flusserian description of projecting something?

I think the main idea is the same, in that it tries to envision a future that, once it's articulated, one can try to work towards.

You will probably never get to this ideal state, but you have a direction for it. The difference, I think, here is: what is being projected? What is the ideal state that is envisioned? If we look at the industrial technologies of the 80s and 90s, there's a very different world that was envisioned. And one of the differences is that the vision was really geared towards the world itself, and trying to control and organize and make available the world as such. And what we are seeing here really is a vision that basically gives up on the world, that really is not interested in trying to organize the world beyond what is necessary to have this transformative moment of acceleration, of a liftoff into virtuality or into space, which I think is pretty much the same: whether you live inside a computer or whether you live inside a starship, these are two ways of being inside a computer.

But the desire that animates it is not the desire of industrial society at all.

Yeah, so this was my second question. If you say unreal data, could it also be articulated differently? I mean, I assume that you also mean to spell out a different kind of project or utopia. Is that even still possible in the kind of overall infrastructure and political economy which you have been describing?

I absolutely think so.

And also with these media or tools?

I think it really depends a bit on how you frame these tools. I think one way that these tools can be used, and are actually already being used, is not to abstract away from material reality, but to provide us with a sense of our material planetary entanglements. This is something that is beyond our immediate senses and sensory sense of the material. For example, if we use these means, [unclear] patterns, or even subliminal patterns, we might be able to get a really extensive sense of data about material and human activity.

[unintelligible] So for me, we are at the point when it's clear that this linear industrial development has come to an end. The negative feedback loops are so strong that it's clear that it cannot be sustained. And there's one path that wants to use that as a liftoff into this virtuality. And this is this massive hundred-billion infrastructure, the data centers of Stargate. And there are ways of understanding these technologies that really give us a material understanding of the world we are living in and the things that we can and perhaps should not do within that world.

I don't think using ChatGPT as your desktop calculator is a good idea in terms of the type of energy it uses, and that will never be helpful. To push it out into every nook and cranny, as a very ineffective way of turning on the light switch at home, is really kind of disastrous. But we need complex analytical tools to understand the geophysical moment we are in. No question.

Thank you so much, Felix. That was great. And I really, really appreciate it.

Thank you, Felix. You've done a great job. And I think we got a lot of questions that are really important, like your mapping of the ideology, especially. But I wondered, and you already hinted at that, whether this ideology really is one, or what its function is. And maybe my suspicion is that it is masking a very hollow core, that there is actually, in economic terms, the real problem that there is no real use case for generative AI. Much more for analytical AI in warfare, as Hito mentioned, but generative AI still has not produced a product that actually makes money. And the answer is, yeah, scaling up, scaling, scaling, scaling, making more data, making more datasets, making more of everything, which is in a way an idea of making more of the same, which is also how these models very much function: more and more of the same. And to mask this, there are all of these kinds of ideologies and eschatologies and so on, ideas of planetary whatever. But do we have to take them seriously, or would the task be to say, yeah, well, you can tell us a lot, but in the end you don't have anything to sell to us, both in the form of a vision and in the form of an actual product? Isn't it a mask of a lack, actually, of any ideological project, really, in the case of Sam Altman, for example?

I think any ideology only becomes really powerful when it serves real material interests. So that is aligned very much with the idea of centralization, with the idea of massive-scale infrastructures, with the idea of inserting this technology into every point where value can be extracted, and they do that quite successfully. I mean, these are really rich people and really rich and really powerful companies. But I don't think business cases alone are enough. I think there needs to be an overshoot of belief that carries you through long stretches of counterfactual reality, of disappointments, of not living up to what you want: the ability to create an idea with which you can draw people in, like the democratization of art through NFTs. Right? I don't think that was what made the money, but it provides a narrative through which certain people at certain points in this chain can make money. And I think you always need both. You need a story, a narrative. I think the narrative is really stupid, but you have to acknowledge that it's very powerful, and that very powerful people with lots of resources to actually transform the world are driving each other into that. And within this, there's capitalist competition, there's geopolitical competition, all driving this in this one direction. And whether you think you have to go to space to become an interplanetary civilization, or because you want to win the next world war, really in the end drives the same thing.

Thank you. More questions?

You talked about this implicit death wish in this technology, and then you presented Sam Altman's statement about this energy expansion, and I was thinking: does his vision not contain another death wish in the form of excess heat? This energy expansion and all these energy transformations just heat the planet up more; it just will take longer. So I wanted to ask, do you think that an expansionist technology like AI can ever shake this death wish? Because in the vision he lays out as a solution, he just relegates it to the future, right?

Yeah. And we see that over and over, people saying, yeah, we all know this is damaging the environment, but the future payoff is so great that we can then retroactively engineer away the mess that we're making now.

Right. And I think that in this strong version, the version that binds itself to all these cosmic, extraplanetary ideas, I don't think the death wish will ever go away. That's really, for me, an answer to giving up on solving the problems we have and saying, okay, this is a basket case. This will never work. Democracy is too annoying. There are too many people, anything you can imagine. Let's just make a clean break and go into space, go into virtuality. And then we have all these happy silicon-based consciousnesses. And there will be so many that a few unhappy carbon-based consciousnesses that suffer are, on balance, worth it.

On the other hand, maybe if I may also comment on this, I think that generative AI is basically not only a cult of entropy, but also a cult of being able to reverse entropy, right? Because I think that the process of image production is based on basically partially reducing entropy, or making an image from noise, which is like maximum entropy. So being able to reverse that partially and to reverse entropy, or to have control over entropy one way or another, right?

You can increase it. For example, Google said it was going to reactivate a nuclear power plant called Three Mile Island, which blew up once already, you know, in order to power their data center. But on the other hand you can also reverse entropy, right? And basically control the process of life, or of getting older, which is what entropy is, through these kinds of processes. Of course, that's a fantasy. Yeah, it's basically a carbon capture fantasy, right? So we can blow out everything because, you know, AI will massively create new breakthroughs. Yeah. What Altman says, we need a breakthrough, is basically: we need something that doesn't exist and we don't know how to get there, but we hope it will somehow arrive.

I mean, just as a fun-fact comment: yesterday, by pure coincidence, I stumbled upon the so-called Proto-Indo-Germanic, Indo-European language root for generative, which is *ǵenh₁-, and it has spawned so many words which basically refer to the same thing, from naive to native to nature to genius to king to transgender, innate, indigenous, oxygen, gentrification, gentle, degenerate. All of these are basically part of the same cosmos of imagination that's tied to the word generative, right?

And we have both terms referring to nature and things which are given, and also terms referring to total construction and artificiality, bound into one. But all of them are sort of combined under, let's say, the overarching topic of life and biopolitics and being able to control it or not, which I find super interesting when we talk about the generative: even as it wants to leave behind, you know, carbon-based bodies, et cetera, it is still obsessed with life and with controlling life and nature.

Okay. I'm sure there's one more question at least.

I was just wondering, if we're talking about the death wish, what can or should be done for kind of a shift of paradigms then?

I think the key is this idea that the world consists of energy and information, and that this is the only thing that matters. And I think we know quite well that this is not true. This is kind of this old, cybernetic Turing fantasy of, you know, if you only get a computer to print something on screen, then it's equivalent to a human being also printing on screens, even though human beings usually don't print on screens.

But if you abstract everything away, you just have the information. I think the counterprogram, which of course is massively already underway, though not with hundred-billion investments in it, is really to try to rearticulate the material and rearticulate our entanglements and dependencies and involvement in the material. And I think it's necessary. There's a lot to learn from, you know, indigenous cultures, but there's also a need to do that on the scale that we actually act on, which is a planetary, kind of Anthropocene scale, and that will require, you know, a lot of work. And I think that's the key. That, in my view, requires new tools, new ways of understanding the world that are data-driven and need data to be articulated. So I'm not saying, you know, AI is evil, or we cannot use it, but there are extremely problematic drivers, and we have to kind of take out the pieces that I think are necessary to understand the world, to understand our current historical moment, and try to combat and stop these very, very problematic aspects in it.

Okay. Thanks a lot, Felix. Thank you so much.